Qualitative and Quantitative Research: Can A Marriage Finally be Consummated?

© Howard Gardner 2026

When I was a beginning doctoral student in psychology—sixty years ago—there was a relatively sharp line between two kinds of research. One was strictly experimental: carefully selected and controlled samples and conditions, data readily analyzed, and often revealing clear cut, hopefully replicable results. The other approach was “softer”—focused on observation, testimony, and dialogue between investigator(s) and the population of interest. “Subjects” were fewer in number but more carefully probed. Analysis of data and reporting of findings proved more challenging than was the case with clearly quantitative research.

The difference between these strands of investigation is captured well in the work of Jean Piaget—widely regarded as the founder of the cognitive strand of developmental psychology. Much of Piaget’s work in early childhood was based on informal experiments conducted with his three young children—Jacqueline, Lucienne and Laurent. His work with school-aged children involved larger samples; but the interventions were quite soft…typically informal, loosely scripted conversations with the subjects, and sometimes a quite restricted subject pool. (Example: Piaget’s studies of moral judgment were carried out entirely with boys.)

Lest it appear that I am placing my thumb on the quantitative side of the contrast, quite the contrary! By temperament (and, also, to some extent by training), I find myself more attracted to the softer, qualitative branch of the discipline. Indeed, I’m as much interested in problem-finding as in problem-solving. I value live interactions, give-and-take with human subjects across the age span, replete with flexibility and—quite often—intriguing surprises. And while content to do simple forms of data analyses, I prefer that findings be quite clear from a small pool…rather than dependent on a large and carefully controlled population and at times extensive statistical manipulations.

Thirty years ago, in conjunction with psychologists William Damon, Mihaly Csikszentmihalyi, and other valued colleagues, I embarked on larger scale studies of adult professionals. I certainly appreciated the advantages of sizeable samples and more carefully controlled interventions. Still, my colleagues and I continued to favor research that featured flexible conversations, rather than strict scripts and tightly controlled (e.g., multiple choice) responses. And then, fifteen years ago, when Wendy Fischman and I began working in American colleges, we gathered far larger samples (e.g., 500 college freshmen, 500 graduating seniors). But we continued to use “semi-structured” interviews for data collections—guided conversations, rather than surveys consisting of precisely worded items with answers selected from carefully formulated multiple choices. We believe that we are more likely to discover what is really happening when we let individuals formulate their own thoughts and we can clarify them as appropriate.

With respect to the kind of research that my colleagues and I have favored over the last thirty years: one huge challenge! Even after conversations have been transcribed, analysis of the data is tremendously time consuming. No multiple choices, no measuring of pulse rate or tracking of eye movements or blinks. Rather, two or more coders need somehow to convert the verbatim transcripts into a small number of discrete categories and then achieve inter-rater reliability. It verges on magic!

A concrete example: Let’s say that we ask 500 incoming students what they believe is the mission, the purpose, of the college in which they are enrolled, and we encourage these students to reflect and then respond informally. Once we have an accurate transcript of the conversation in hand (and on paper!), we need to create a manageable number of response categories and achieve agreement across raters on what we have actually heard from our subjects and their apparent intended meaning(s). Should the raters fail to agree, they need to summon a third rater. The trio then discusses discrepancies until they achieve some kind of resolution (which occasionally involves negotiation or soliciting yet another opinion.)

In our initial collegiate study, five years of data collection led to over two years of data analysis and frequent inter-rater consultations. Even then, we frequently had to be satisfied with only 80% agreement across coders.

To complexify the terrain yet further: In the study just mentioned, let’s say we repeat the same question—“What’s the purpose of college?”—to college seniors. Of course, if the seniors give the same kinds of answers as the freshman, no problem! But we found that seniors often approach such questions quite differently from students earlier in their matriculation. It’s vital to capture and categorize those possibly salient differences while still allowing across-age and across-stage comparisons.

How much simpler research could be if one just used multiple choices, or reaction time, or eye blink, or brain waves, or some other readily quantifiable measure rather than conversations—Piaget-style, or those carried on in the tradition of our decades-long “Good Work” enterprise.

But there’s another side:

In our own studies of colleges, had we used only multiple-choice formats, we would likely have missed important developments. For instance, when we began the study in 2012, we had no idea that mental health issues would loom so large. In a set of multiple choices about problems on campus, we might well not even have included that option. Similarly, in 2015, we had not anticipated that a feeling of belonging (or not belonging) would register so vividly on the screen of various constituencies. And, of course, in 2012, few could have anticipated the power of social media, or the seismic effects of the election (and re-election) of Donald Trump, or the consequences of a pandemic that lasted for years.

Perhaps the most troubling finding from our college study was the striking lack of agreement—within and across subject groups—about the mission(s) of their respective institution of higher learning. This finding only emerged gradually after years of reading and contemplating the testimony of approximately two thousand subjects. Back in 2012, when the study was launched, we could never have anticipated the particular configuration of responses that emerged.

Full stop.

When I first studied psychological research methods (60 years ago)—and, indeed, when we first began our studies of good work in the professions (30 years ago)—it was not possible (at least for most mere mortals) to anticipate how the type of research that I’ve personally favored could have been radically streamlined. The gulf between quantitatively and qualitatively oriented research approach was quite sharp and seemingly unfordable. (But see the thoughtful comment below from Leonard Saxe.)

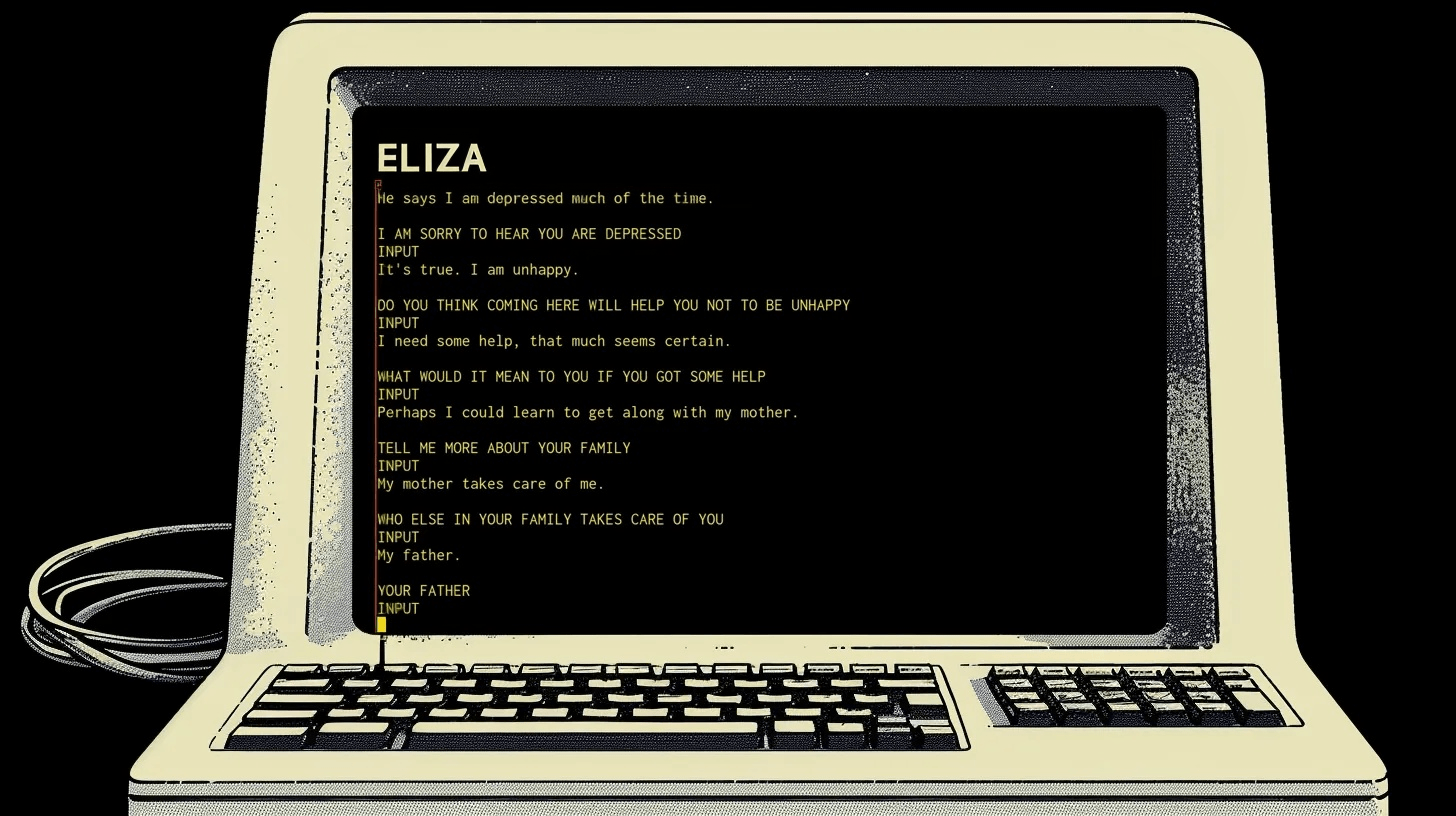

Example of a conversation using the ELIZA chatbot (1966)

But with the advent of Large Language Models (LLM), the terrain may be radically simplified and smoothened. It’s already clear that much of the coding of conversational transcripts (each of which can easily run a dozen single-spaced pages) can be done reasonably well by such instruments—80% agreement between LLM and human coders seems well within reach. As responsible researchers, we would continue to do some coding ourselves but the lion’s share of the coding could be done reliably by LLMs. And though it does not elate me to say this: the kinds of person-to-person interviews that we (and others in the Piagetian-tradition) have used for decades—initially in-person, now often via Zoom or an equivalent platform—could also be done competently by a Large Language Instrument, a psychological version of a well-informed and sympathetic Alexa…or, to go back a half century, to ELIZA, as devised by pioneering computer scientist Joseph Weizenbaum.

Perhaps it may even be possible to have the best of two worlds: open-ended conversations with individuals drawn from a given population (be it professionals across different vocations or diverse denizens on a college campus) and reliable scoring of these conversations—establishing firm and replicable empirical results.

There remain questions for those who conceive of these studies—the comfort of “subjects” in conversing with a computational system, the issue of responsibility for the analysis of data, the task of placing the findings within the broader scholarly literature…and (ultimately) in forms that make sense as well to the general public. Perhaps all of this will be done by LLM—in which case, we can close down our universities and research institutions and just play soccer, ping-pong, or bridge…or create and exercise entirely new forms of cognition. (I hope that these dystopic outcomes will not occur—but my crystal ball is no less clouded than that of my readers.)

Of course, there are challenges—confidentiality, costs, consequences—but also opportunities for training that can capture the best of both quantitative and qualitative approaches to research. And I’m pleased to say that, thanks to the support of the Kern Family Foundation, our research team is exploring these opportunities.

REFERENCES

Fischman, W. and Gardner, H. (2022). The real world of college. MIT Press.

Gardner, H., Csikszentmihalyi, M, Damon,W. (2001). Good work: when excellence and ethics meet. Basic Books.

Weizenbaum, J. (1976). Computer power and human reason: from judgment to calculation. W.H. Freeman and Company.